OCTOPUS

OCTOPUS (Osaka university Compute & sTOrage Platform Urging open Science) is a next-generation computing and storage infrastructure. The system consists of 140 general-purpose CPU nodes (NEC LX201 Ein-1), each equipped with two 6th-generation Intel Xeon Scalable processors (Granite Rapids), and large-capacity storage (effective capacity: 3.58 PB).

System Configuration

| Total computing performance | | 2.293 PFLOPS |
|---|---|---|
| Node configuration | General-purpose CPU node group: 140 nodes | Computing performance per node: 16.384 TFLOPS; Processor: Intel Xeon 6980P (Granite Rapids, 2.0 GHz, 128 cores) ×2; Main memory: 768 GB |
| Storage | DDN EXAScaler (Lustre) | HDD: 3.58 PB |
| Interconnect | InfiniBand NDR200 (200 Gbps) | |

*The floating-point precision used to derive the theoretical computing performance is double precision (FP64).
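The listed figures follow the usual peak-performance arithmetic: sockets × cores × clock × FP64 operations per cycle per core, multiplied by the number of nodes. The Python sketch below reproduces them under one assumption that this page does not state: 32 double-precision FLOP per cycle per core (two AVX-512 FMA units, each handling 8 doubles with 2 operations per FMA). The function name `node_peak_tflops` is illustrative only.

```python
# Theoretical FP64 peak = sockets x cores x clock (GHz) x FLOP per cycle per core.
# ASSUMPTION (not stated on this page): 32 FP64 FLOP/cycle per core,
# i.e. two AVX-512 FMA units x 8 doubles x 2 operations per FMA.
FLOP_PER_CYCLE = 32


def node_peak_tflops(sockets: int, cores: int, ghz: float,
                     flop_per_cycle: int = FLOP_PER_CYCLE) -> float:
    """Return the theoretical FP64 peak of one node in TFLOPS."""
    gflops = sockets * cores * ghz * flop_per_cycle
    return gflops / 1000


per_node = node_peak_tflops(sockets=2, cores=128, ghz=2.0)  # Xeon 6980P x2
system_tflops = 140 * per_node                               # 140 nodes

print(f"per node: {per_node:.3f} TFLOPS")
print(f"system:   {system_tflops:.2f} TFLOPS")
```

The system total, 2,293.76 TFLOPS, appears in the table cut to 2.293 PFLOPS.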


News

- Paid services will begin in December 2025. For details, please refer to this page.

- September 2025: [Press release] Operation Start of new OCTOPUS by NEC at D3 Center at University of Osaka.
  Press Release

- August 2025: The free trial of the next-generation computing and storage platform OCTOPUS has started.
  OCTOPUS Free Trial

- July 2025: The OCTOPUS Rack Design Contest was held. (Applications are now closed. Thank you very much for the many submissions.)
  OCTOPUS Rack Design Contest

mdx II

"mdx II" is a system consisting of a cluster of compute nodes and storage, built on OpenStack. Each resource is provided through virtual machines, and an independent environment can be created for each project. It can be used not only for supercomputer applications such as large-scale data processing and high-performance computing, but also for a wide range of other purposes, such as hosting data repositories and data platforms. mdx II is operated by the Data Utilization Society Creation Platform Collaborative Organization and provides services to educational and research institutions as well as private companies. Please see this page for details.

System Configuration

| Total computing performance | | 2,534.58 TFLOPS |
|---|---|---|
| Node configuration | Standard compute node group: 54 nodes (387.072 TFLOPS) | CPU: Intel Xeon Platinum 8480+ (2.0 GHz, 56 cores) ×2; Theoretical performance (per node): 7.168 TFLOPS; Main memory: 512 GB |
| | Interoperable node group: 6 nodes (43.008 TFLOPS) | CPU: Intel Xeon Platinum 8480+ (2.0 GHz, 56 cores) ×2; Theoretical performance (per node): 7.168 TFLOPS; Main memory: 512 GB |
| | GPU node group: 15 nodes (2,104.5 TFLOPS) | CPU: Intel Xeon Gold 6530 (2.1 GHz, 32 cores) ×2; GPU: NVIDIA H200 ×4; Theoretical performance (per node): 140.300 TFLOPS; Main memory: 1,024 GB |
| Storage group | Lustre file storage | DDN ExaScaler; Usable capacity: NVMe 1,106.48 TB |
| | Object storage | Cloudian HyperStore; Usable capacity: 432 TB |
| Interconnect | 200 Gigabit Ethernet (200GbE) | |

*The calculation precision used to derive the theoretical computing performance is "double precision."
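The total is the sum of the three node groups, each being node count × per-node peak. The sketch below checks this using the per-node figures from the table, then cross-checks the GPU node figure. That cross-check rests on two assumptions not stated on this page: 32 FP64 FLOP per cycle per Xeon core, and 34 TFLOPS FP64 (non-Tensor-Core) per NVIDIA H200.

```python
# Per-node peaks as listed in the table above (TFLOPS).
PER_NODE = {"standard": 7.168, "interoperable": 7.168, "gpu": 140.300}
NODES = {"standard": 54, "interoperable": 6, "gpu": 15}

group_tflops = {name: NODES[name] * PER_NODE[name] for name in NODES}
total = sum(group_tflops.values())

# Cross-check of the GPU node figure.
# ASSUMPTIONS (not stated on this page): 32 FP64 FLOP/cycle per Xeon core,
# 34 TFLOPS FP64 (non-Tensor-Core) per NVIDIA H200.
gpu_node = 2 * 32 * 2.1 * 32 / 1000 + 4 * 34.0  # Xeon Gold 6530 x2 + H200 x4

for name, tflops in group_tflops.items():
    print(f"{name:14s} {tflops:9.3f} TFLOPS")
print(f"{'total':14s} {total:9.3f} TFLOPS")
print(f"GPU node (derived): {gpu_node:.4f} TFLOPS")
```

The derived GPU node value (about 140.30 TFLOPS) matches the listed 140.300 TFLOPS, and the group figures add up to the listed total of 2,534.58 TFLOPS.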

Virtual Machines (CPU Pack)

Example: Applying for 100 CPU packs allows you to use CPU: 100 virtual cores / Memory: 200 GB.

*One virtual core is equivalent to half a physical core.

| CPU pack | |
|---|---|
| CPU core count | 1 virtual core |
| Memory | 2 GB |
| Theoretical performance (per CPU pack) | Approx. 32 GFLOPS |
| Maximum assignable CPU packs per VM | 224 CPU packs (224 virtual cores) |
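Because every CPU pack adds the same resources, a VM's size scales linearly with the number of packs. A minimal sketch of that arithmetic; the function name `cpu_pack_resources` is hypothetical and not part of any mdx II interface, and the per-pack values are taken from the table above.

```python
# Per-pack figures from the table above.
VCORES_PER_PACK = 1
MEM_GB_PER_PACK = 2
GFLOPS_PER_PACK = 32       # "approx." value from the table
MAX_PACKS_PER_VM = 224
PHYSICAL_PER_VCORE = 0.5   # 1 virtual core = half a physical core


def cpu_pack_resources(packs: int) -> dict:
    """Hypothetical helper: resources of a VM built from `packs` CPU packs."""
    if not 1 <= packs <= MAX_PACKS_PER_VM:
        raise ValueError(f"a VM takes 1..{MAX_PACKS_PER_VM} CPU packs, got {packs}")
    vcores = packs * VCORES_PER_PACK
    return {
        "virtual_cores": vcores,
        "physical_cores": vcores * PHYSICAL_PER_VCORE,
        "memory_gb": packs * MEM_GB_PER_PACK,
        "approx_gflops": packs * GFLOPS_PER_PACK,
    }


print(cpu_pack_resources(100))
```

For 100 packs this gives the 100 virtual cores and 200 GB from the example. At the 224-pack maximum, a VM holds 112 physical cores, the same as the 2 × 56 cores of a standard compute node.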

Virtual Machines (GPU Pack)

Example: Applying for 2 GPU packs allows you to use 2 GPUs / CPU: 60 virtual cores / Memory: 492 GB.

*One virtual core is equivalent to half a physical core.

| GPU pack | |
|---|---|
| Number of GPUs | 1 GPU |
| CPU core count | 30 virtual cores |
| Memory | 246 GB |
| Theoretical performance (per GPU pack) | Approx. 35 TFLOPS |
| Maximum assignable GPU packs per VM | 4 GPU packs (120 virtual cores) |
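GPU packs scale the same linear way, with a limit of 4 packs per VM. A sketch under the same caveats: `gpu_pack_resources` is a hypothetical helper, and the per-pack values come from the table above.

```python
# Per-pack figures from the table above.
GPUS_PER_PACK = 1
VCORES_PER_PACK = 30
MEM_GB_PER_PACK = 246
TFLOPS_PER_PACK = 35       # "approx." value from the table
MAX_PACKS_PER_VM = 4


def gpu_pack_resources(packs: int) -> dict:
    """Hypothetical helper: resources of a VM built from `packs` GPU packs."""
    if not 1 <= packs <= MAX_PACKS_PER_VM:
        raise ValueError(f"a VM takes 1..{MAX_PACKS_PER_VM} GPU packs, got {packs}")
    return {
        "gpus": packs * GPUS_PER_PACK,
        "virtual_cores": packs * VCORES_PER_PACK,
        "memory_gb": packs * MEM_GB_PER_PACK,
        "approx_tflops": packs * TFLOPS_PER_PACK,
    }


print(gpu_pack_resources(2))
```

Two packs give 2 GPUs, 60 virtual cores and 492 GB, matching the example. Four packs give the 120 virtual cores listed as the per-VM maximum.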

OCTOPUS

OCTOPUS retired on March 29, 2024.

OCTOPUS (Osaka university Cybermedia cenTer Over-Petascale Universal Supercomputer) was a cluster system that began operation in December 2017. It was composed of four different types of clusters: general-purpose CPU nodes, GPU nodes, Xeon Phi nodes, and large-scale shared-memory nodes, for a total of 319 nodes.

System Configuration

| Theoretical computing speed | | 1.463 PFLOPS |
|---|---|---|
| Compute nodes | General-purpose CPU nodes: 236 nodes (471.24 TFLOPS) | CPU: Intel Xeon Gold 6126 (Skylake, 2.6 GHz, 12 cores) 2 CPUs; Memory: 192 GB |
| | GPU nodes: 37 nodes (858.28 TFLOPS) | CPU: Intel Xeon Gold 6126 (Skylake, 2.6 GHz, 12 cores) 2 CPUs; GPU: NVIDIA Tesla P100 (NVLink) 4 units; Memory: 192 GB |
| | Xeon Phi nodes: 44 nodes (117.14 TFLOPS) | CPU: Intel Xeon Phi 7210 (Knights Landing, 1.3 GHz, 64 cores) 1 CPU; Memory: 192 GB |
| | Large-scale shared-memory nodes: 2 nodes (16.38 TFLOPS) | CPU: Intel Xeon Platinum 8153 (Skylake, 2.0 GHz, 16 cores) 8 CPUs; Memory: 6 TB |
| Interconnect | InfiniBand EDR (100 Gbps) | |
| Storage | DDN EXAScaler (Lustre, 3.1 PB) | |

* This information is as of July 21, 2017, so the performance values may contain slight discrepancies. Thank you for your understanding.
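The system totals are the sums of the four node groups. The sketch below adds up the listed groups and also reproduces the general-purpose CPU group from per-node arithmetic. That second step assumes 32 FP64 FLOP per cycle per Skylake-SP core (two AVX-512 FMA units), which this page does not state.

```python
# (node count, group peak in TFLOPS) as listed in the table above.
GROUPS = {
    "general-purpose CPU": (236, 471.24),
    "GPU": (37, 858.28),
    "Xeon Phi": (44, 117.14),
    "large-scale shared-memory": (2, 16.38),
}

total_nodes = sum(nodes for nodes, _ in GROUPS.values())
total_tflops = sum(tflops for _, tflops in GROUPS.values())

# Cross-check one group.
# ASSUMPTION (not stated on this page): 32 FP64 FLOP/cycle per Skylake-SP core.
cpu_node_tflops = 2 * 12 * 2.6 * 32 / 1000  # Xeon Gold 6126 x2

print(f"nodes: {total_nodes}")
print(f"peak:  {total_tflops:.2f} TFLOPS")
print(f"CPU group (derived): {236 * cpu_node_tflops:.2f} TFLOPS")
```

The groups add up to 319 nodes and 1,463.04 TFLOPS, which the table lists as 1.463 PFLOPS.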


Technical material

Please see the following article:

Petaflops-class hybrid supercomputer OCTOPUS: Osaka university Cybermedia cenTer Over-Petascale Universal Supercomputer (Toward the revival and advancement of the Cybermedia Center's supercomputing services), in Japanese [DOI: 10.18910/70826]

SQUID

SQUID (Supercomputer for Quest to Unsolved Interdisciplinary Datascience), also known as the Supercomputer system for HPC and HPDA, started operation on May 1, 2021. The system is composed of three different types of clusters: general-purpose CPU nodes, GPU nodes, and vector nodes. Its total peak performance is 16.591 PFLOPS.

This system is scheduled to end service on June 30, 2027.

System Configuration

| Total computing performance | | 16.591 PFLOPS |
|---|---|---|
| Node configuration | General-purpose CPU nodes: 1,520 nodes (8.871 PFLOPS) | CPU: Intel Xeon Platinum 8368 (Ice Lake, 2.4 GHz, 38 cores) 2 CPUs; Memory: 256 GB |
| | GPU nodes: 42 nodes (6.797 PFLOPS) | CPU: Intel Xeon Platinum 8368 (Ice Lake, 2.4 GHz, 38 cores) 2 CPUs; Memory: 512 GB; GPU: NVIDIA A100 SXM4 40GB 8 units |
| | Vector nodes: 36 nodes (0.922 PFLOPS) | Vector host: AMD EPYC 7402P (2.8 GHz, 24 cores) 1 CPU; Memory: 128 GB |
| | | Vector engine: NEC SX-Aurora TSUBASA Type 20A (10 cores) 8 units; Memory: 48 GB |
| Storage | DDN EXAScaler (Lustre) | HDD: 20.0 PB; NVMe: 1.2 PB |
| Interconnect | Mellanox InfiniBand HDR (200 Gbps) | |

*The calculation precision used to derive the theoretical computing performance is "double precision."
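Adding the three listed group figures gives 16.590 PFLOPS, one unit in the last digit below the listed total of 16.591 PFLOPS. A plausible explanation, which this page does not confirm, is that each group figure is cut (not rounded) to three decimals. The sketch checks this for the CPU group, assuming 32 FP64 FLOP per cycle per Ice Lake-SP core (two AVX-512 FMA units). The helper `truncate` is illustrative only.

```python
import math

# Group peaks as listed in the table above (PFLOPS).
GROUPS_PFLOPS = {"CPU": 8.871, "GPU": 6.797, "vector": 0.922}


def truncate(x: float, digits: int = 3) -> float:
    """Cut (not round) x to the given number of decimals."""
    scale = 10 ** digits
    return math.floor(x * scale) / scale


listed_sum = sum(GROUPS_PFLOPS.values())

# ASSUMPTION (not stated on this page): 32 FP64 FLOP/cycle per Ice Lake-SP core.
cpu_node_tflops = 2 * 38 * 2.4 * 32 / 1000        # Xeon Platinum 8368 x2
cpu_group_pflops = 1520 * cpu_node_tflops / 1000  # 1,520 nodes

print(f"sum of listed groups: {listed_sum:.3f} PFLOPS")
print(f"CPU group, unrounded: {cpu_group_pflops:.6f} PFLOPS")
print(f"CPU group, truncated: {truncate(cpu_group_pflops):.3f} PFLOPS")
```

The unrounded CPU group value is 8.871936 PFLOPS. Ordinary rounding would give 8.872, while truncation gives the listed 8.871, which is consistent with that explanation.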


News

- When you publish research results obtained using SQUID, please consider citing the following paper on SQUID:
  Supercomputer for Quest to Unsolved Interdisciplinary Datascience (SQUID) and its Five Challenges

- An article about SQUID was published on the Intel website:
  Osaka University CMC Enables Large-Scale Research: SQUID opens new doors to national and global scientific collaboration with a heterogeneous architecture

- In September 2022, collaborative research using SQUID was introduced in a feature article in Nikkei Leaders Vision.

- A feature article on SQUID [DOI: 10.18910/87667] was published in Cyber HPC Journal No. 11.

- The AXIES2021 paper "ONION: Osaka University's Data Aggregation Infrastructure" is now online.

- In June 2021, SQUID-CPU was ranked 67th, 54th, and 57th in the TOP500, HPCG, and Green500 lists, respectively.

- We unveiled SQUID on May 19, 2021. Please see this page for details.

- SQUID service started on May 6, and we issued a press release for SQUID.

- On April 6, Intel announced the 3rd Generation Intel Xeon Scalable processors (Ice Lake).
  Each general-purpose CPU node and GPU node in SQUID has two Intel Xeon Platinum 8368 processors (38 cores, 2.40 GHz). Detailed information on the processor is available from the following websites (Osaka University is also mentioned in the articles):
  Intel's event (How Wonderful Gets Done 2021)
  Supermicro's press release
  HPCwire

- If you plan to port code running on the SX-ACE system to SX-Aurora TSUBASA, you are advised to modify the source code and to collect data on your program running on SX-ACE in advance. Details are available in the document below.
  Notes on Migrating to SX-Aurora TSUBASA (in Japanese)

- Mr. Hashizume of DataDirect Networks Japan introduced SQUID at the 20th PC Cluster Symposium on December 14, 2020.

- Please see our press release issued on November 25 for details of SQUID.

ONION

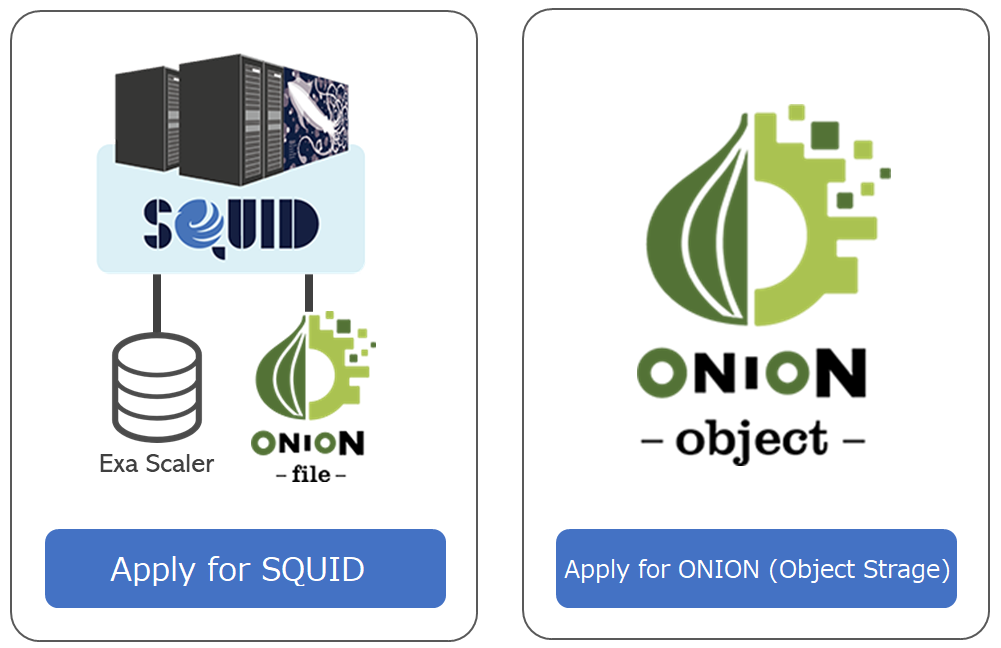

ONION (Osaka university Next-generation Infrastructure for Open research and open InnovatioN) is a data aggregation platform linked to SQUID. ONION consists of "EXAScaler", the file system of SQUID; "ONION-file", a web storage service; and "ONION-object", an object storage service.

ONION makes it easy for you to transfer data between your PC and the supercomputer. In addition, ONION can be used in a variety of ways, such as immediately sharing calculation results with overseas or corporate collaborators who do not have a SQUID or OCTOPUS account, or manipulating data from a smartphone. Of course, it can also be used to store and share research data in the laboratory.

The following paper describes the background, system configuration, and details of the functions.

ONION: Osaka University's Data Aggregation Infrastructure

Application for use

To use ONION-object, you must apply separately from SQUID. Please see this page for applications, consultations, and service details.

* For the usage fees of SQUID and ONION-object, please refer to this page. There, ONION-object is listed as "ONION (object storage)".

System configuration

EXAScaler

Main features

- Save, view, move and delete data

- Input and output data from SQUID calculation nodes

- Access data from client software that supports SFTP and S3

| Effective capacity (HDD) | 20 PB |
|---|---|
| Effective capacity (NVMe) | 1.2 PB |
| Max number of inodes | About 8.8 billion |
| Max assumed effective throughput (HDD) | Over 160 GB/s |
| Max assumed effective throughput (NVMe) | Write: over 160 GB/s; Read: over 180 GB/s |

ONION-file

All operations and settings can be performed through a web browser. By default, only the SQUID home area on EXAScaler is linked, but any external storage compatible with WebDAV, SFTP, or S3 can be linked (for example, the OCTOPUS work area and ONION-object, described below).

The following operations can be performed on the linked storage from a web browser.

- Save, view, move, and delete data

- Publish a URL to share data (download/upload) with people who do not use SQUID or ONION.

ONION-object

Main features

- Save, browse, move, and delete data

- Operate on objects and buckets with the S3 API (some operations are also available from a web browser)

| Effective capacity | 950 TiB (we plan to expand this in stages) |
|---|---|
| Data protection method | Erasure coding (4 data chunks + 2 parity chunks) |
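With a 4 + 2 erasure-coding layout, each object is split into 4 data chunks and 2 parity chunks, for 6 chunks in total. That makes 4/6 (about 67%) of the raw space usable. The page does not say which code is used. If it is a maximum-distance-separable code such as Reed-Solomon (an assumption here), any 2 of the 6 chunks can be lost without losing data. The helper `erasure_coding_profile` is hypothetical and only does this arithmetic.

```python
def erasure_coding_profile(data_chunks: int, parity_chunks: int) -> dict:
    """Hypothetical helper: capacity and fault-tolerance figures of a k + m layout.

    ASSUMPTION: the code is maximum-distance-separable (e.g. Reed-Solomon),
    so any `data_chunks` of the chunks suffice to rebuild an object.
    """
    total = data_chunks + parity_chunks
    return {
        "chunks_per_object": total,
        "storage_efficiency": data_chunks / total,  # usable / raw
        "raw_per_usable": total / data_chunks,      # raw bytes stored per usable byte
        "tolerated_chunk_losses": parity_chunks,
    }


print(erasure_coding_profile(4, 2))
```

The 1.5× raw-to-usable ratio is the cost of surviving two simultaneous chunk losses. Plain three-way replication would survive the same number of losses at 3× raw.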

Notes

- ONION-object is operated with the utmost care by the Cybermedia Center and NEC Corporation (the vendor of SQUID), but data is not backed up. Data may therefore be lost due to system failures, unforeseen accidents, or natural disasters. The Cybermedia Center is not responsible for any data loss, so please back up all necessary files yourself. Please also note that ONION-object is a trial service and is scheduled to be terminated at the end of April 2026 unless Osaka University takes budgetary measures.

How to use

How to use ONION

Gallery

public relations materials

SX-5

This system is no longer in service. (Service period: January 2001 to January 2007)

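The SX-5/128M8 was a cluster-type supercomputing system consisting of 8 NEC SX-5/16Af nodes, each equipped with 16 vector processors and 128 GB of main memory. The nodes were connected by IXS, a dedicated 64 Gbps internode interconnect, as well as by 800 Mbps HiPPI and 1 Gbps Gigabit Ethernet.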

Overall system specifications

| Theoretical performance | 1.2 TFLOPS |
|---|---|
| Number of nodes | 8 |
| Number of CPUs | 128 |
| Rated power consumption | 443.36 kVA |

Per-node specifications

| CPU | NEC SX-5/16Af |
|---|---|
| Number of CPUs | 16 |
| Memory capacity | 128 GB |
| Memory bandwidth | 16 GB/s |
| Theoretical performance | 160 GFLOPS |
| Rated power consumption | 55.42 kVA |
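The overall figures are the per-node figures multiplied by the 8 nodes. The sketch below does that arithmetic. It shows that 8 × 160 GFLOPS = 1,280 GFLOPS, which the overall table lists as 1.2 TFLOPS, and that each CPU accounts for 160 / 16 = 10 GFLOPS.

```python
# Per-node figures from the table above.
NODES = 8
CPUS_PER_NODE = 16
GFLOPS_PER_NODE = 160
KVA_PER_NODE = 55.42

total_cpus = NODES * CPUS_PER_NODE                 # 128 CPUs
total_gflops = NODES * GFLOPS_PER_NODE             # 1,280 GFLOPS
total_kva = NODES * KVA_PER_NODE                   # 443.36 kVA
gflops_per_cpu = GFLOPS_PER_NODE / CPUS_PER_NODE   # 10 GFLOPS

print(f"CPUs: {total_cpus}")
print(f"peak: {total_gflops} GFLOPS ({total_gflops / 1000:.2f} TFLOPS)")
print(f"power: {total_kva:.2f} kVA")
print(f"per CPU: {gflops_per_cpu:.0f} GFLOPS")
```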